IV. DESCRIBING PLACES: THE IMAGE PROCESSING MODULE 4 地点描述:图像处理模块

4.1 paragraph

Visual place description techniques fall into two broad categories:

基于视觉的地点描述技术分为两大类:

those that selectively extract parts of the image that are in some way interesting or notable;

一类是选择性地提取一些有意义的图像部分;

and those that describe the whole scene, without a selection phase.

另一类是无选择性地描述整个场景。

Examples of the first category are local feature descriptors such as scale-invariant feature transforms (SIFT) [72] and speeded-up robust features (SURF) [73].

局部特征描述子属于第一类,例如尺度不变特征(SIFT)[72]和加速鲁棒特征(SURF)[73]。

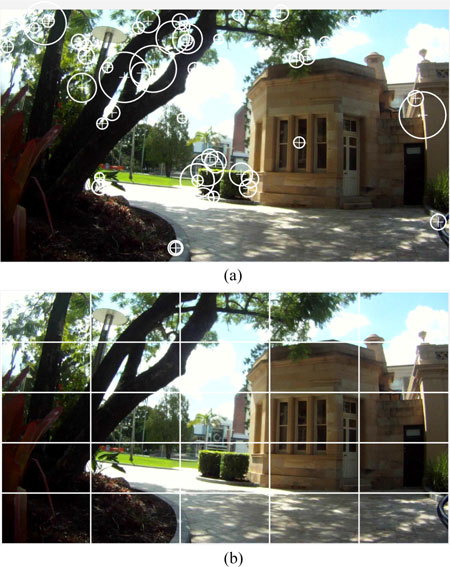

Local feature descriptors first require a detection phase which determines the parts of the image to retain as local features [see Fig. 4(a)].

局部特征描述子首先要检测图像中的局部特征[见图 4(a)]。

In contrast, global or whole-image descriptors such as Gist [74], [75] do not have a detection phase but process the whole image regardless of its content [see Fig. 4(b)].

而全局图像描述子,如Gist [74],[75]没有检测阶段,但需要处理整张图像,但不关心图像的内容[见图4(b)]。

A. Local Feature Descriptors

A.局部特征描述子

Fig. 4. Visual place description techniques fall into two broad categories.

图4 基于视觉的地点描述技术分为两大类。

(a)Interesting or salient parts of the image are selected for extraction, description and storage.

a)选择图像中感兴趣或突出显著的部分用来提取,描述和存储。

For example, SURF [73] extracts interest points in an image for description.

例如,SURF [73]提取并描述图像中的兴趣点。

The circles are interest points selected by SURF within this image.

圆圈中是该图像内的SURF选择的兴趣点。

The number of possible features may vary depending on the number of interest points detected in the image.

特征的数量取决于图像中检测到的感兴趣点。

(b) Image is described in a predefined way such as the grid shown here without first detecting interest points.Whole-image descriptors such as Gist [74], [75] then process each block regardless of its content.

(b)以预定方式描述图像,例如通过对图像栅格化,然后利用全图像描述子,如Gist算法[74],[75],这里的网格,而不是先检测兴趣点。全图像描述子,如Gist直接处理每个栅格,而不管图片内容,整个过程没有先检测兴趣点不管图片内容,直接处理每个块。

4.1.1 paragraph

The development of the local feature method SIFT [72] led to its widespread use in place recognition [76]–[83].

SIFT [72]在场景识别中应用广泛 [76] - [83]。

As other local feature detection and description methods were developed, they too were applied to the visual localization and place recognition problem.

随着技术的发展,其他局部特征检测和描述方法也用于视觉定位和场景识别问题中。

For example, Ho and Newman [84] used Harris affine regions [85], Murillo et al. [86] and Cummins and Newman [87] used SURF [73], while FrameSLAM [2] used CenSurE [88].

例如,Ho和Newman [84]使用了Harris affine regions[85],Murillo等人 [86]和Cummins与Newman[87]使用了SURF [73],而FrameSLAM [2]使用了CenSurE [88]。

Since local feature extraction consists of two steps—detection followed by description—it is not uncommon to combine different techniques for each.

局部特征提取包含特征检测与描述子提取两个步骤,每个步骤可以使用不同的技术。

For example, Mei et al. [89] used the detection technique FAST [90] to find keypoints in the image, which were then described by SIFT descriptors.

例如,Mei等人 [89]使用FAST检测方法 [90]来找到图像中的关键点,然后通过SIFT描述子进行描述。

Similarly, Churchill and Newman [15] used FAST extraction combined with BRIEF [91] descriptors.

类似地,Churchill and Newman [15]使用FAST提取,再用BRIEF [91]描述。

4.1.2 paragraph

Each image may contain hundreds of local features, and directly matching image features can be inefficient.

由于每张图像都包含数百个局部特征,所以直接匹配图像特征效率很低。

The bag-ofwords model [92], [93] increases efficiency by quantizing local features into a vocabulary that can be compared using text retrieval techniques [94].

为了提高匹配效率,文献[92][93]利用词袋模型,将局部特征量化为词汇信息,然后通过文本检索技术[94]来进行匹配[92] [93]通过量化局部特征,使其变为检索词汇 [94]来提高效率。

The bag-of-words model partitions a feature space, such as SIFT or SURF descriptors, into a finite number of visual words.

词袋模型将特征空间(例如SIFT或SURF描述子)分成划分成数量有限的一些视觉词汇。

A typical vocabulary contains 5000–10 000 words, but a vocabulary as large as 100 000 words has been used for place recognition by FAB-MAP 2.0 [87].

一般词汇量为5000–10000字,但FAB-MAP 2的词汇量有100000个[ 87]。

For each image, every feature is assigned to a particular word, ignoring any geometric or spatial structure, thereby allowing images to be reduced to binary strings or histograms of length n, where n is the number of words in the vocabulary.

图像中的每个特征点为一个特定的词,然后忽略几何或空间结构,将图像压缩到二进制串或长度为n的直方图,其中n是词库中的单词数量。

4.1.3 paragraph

Images described using the bag-of-words model can be efficiently compared using binary string comparison such as a Hamming distance or histogram comparison techniques.

对于使用_bag-of-words描述的图像,使用能够通过二进制串匹配(如汉明距离或直方图对比技术)达到较好的_效果较好。

Vocabulary trees [95] can make the process for large-scale place recognition even more efficient.

词汇树[95]可以提高大规模场景识别的效率。

Originally proposed for object recognition, vocabulary trees use a hierarchical model to define words, an approach that enables faster lookup of visual words and the use of a larger and thus more discriminating vocabulary.

最初,词汇树是针对目标识别提出的,通过分层模型来定义词,建立更大的和易分辨的词汇库,提高了查找视觉词汇的速度。

Localization systems that use the bag-of-words approach include [82], [84], [87], [96], [97] and many others.

使用bag-of-words方法的定位系统包括[82],[84],[87],[96],[97]等等。

4.1.4 paragraph

Because the bag-of-words model ignores the geometric structure of the place it is describing, the resulting place description is pose invariant;

因为bag-of-words忽略了地点的几何结构,所以该方法的地点描述具有姿态无关性,

that is, the place can be recognized regardless of the position of the robot within the place.

即,无论机器人在该地点内的位置如何,都能够识别。

However, the addition of geometric information to a place has been shown to improve the robustness of place matching, particularly in changing conditions [14], [87], [98]–[100].

然而,向地点描述中添加几何信息可以提高匹配的鲁棒性,尤其是在动态环境中[14],[87],[98] - [100]。

These systems may assume a laser sensor is available for 3-D information [98], use stereo vision [14], epipolar constraints [100], [101], or simply define the scene geometry according to the position of the elements within the image [102], [103].

这些系统能够通过激光传感器获取3D信息[98],再使用立体视觉[14],对极约束[100],[101]或者图像内元素的位置来定义场景的几何特性[102],[103]。

The tradeoff between pose invariance— recognizing places regardless of the robot orientation—and condition invariance—recognizing places when the visual appearance changes—has not yet been resolved and is a current challenge in place recognition research.

但是到目前为止,姿态无关性(场景识别与机器人方向无关)以及环境外界条件无关性(外观信息变化时的场景识别)之间如何权衡问题还有待解决,这也是当前位置识别研究领域中的一大挑战。

4.1.5 paragraph

The bag-of-words model is typically predefined based on features extracted from a training image sequence.

我们可以从训练图像序列中提取特征,来预构建定义Bag-of-words模型。

This approach can be limiting as the resulting model is environment dependent and needs to be retrained if a robot is moved into a new area.

但该方法具有局限性,因为所得到的模型依赖于环境,如果机器人移动到新的区域,则需要重新训练。

Nicosevici and Garcia [56] propose an online method to continuously update the vocabulary based on observations, while still being able to match prior observations with future observations.

Nicosevici和Garcia [56]提出了一种在线方法,根据观测结果不断更新词汇,同时仍然能与已有的观测信息进行匹配。

As a result, a bag-of-words model can be used without requiring a pretraining phase and can adapt to the environment, outperforming pretrained models despite requiring less a priori knowledge [56].

因此, bag-of-words不需要预训练阶段也能适应环境,而且效果优于预训练模型[56]。

B. Global Descriptors B. 全局描述子

4.B.1 paragraph

Global place descriptors used in early localization systems included color histograms [5] and descriptors based on principal component analysis [104].

早期定位系统中使用的全局地点描述子包括颜色直方图[5]和主成分分析 [104]。

Lamon et al. [105] used a variety of image features—such as edges [106], corners [107], and color patches—combined into a fingerprint of a location.

Lamon等人 [105]使用各种图像特征,如边缘[106],角点[107]和色块进行组合形成一个位置的指纹特征。组合成位置指纹。

By ordering these features in a sequence between 0◦ and 360◦, place recognition could be reduced to string-matching. These systems used omnidirectional cameras which allowed otation-invariant matching at each place.

通过将特征在0°和360°之间顺序排列,可以将使得位置识别问题能够简化成一个字符串匹配问题。系统使用全景相机,所以每个地点的匹配具有旋转不变性。

4.B.2 paragraph

Global descriptors can be generated from local feature descriptors by predefining the keypoints in the image—for example, using a grid-based pattern—and then using the chosen feature description method on the preselected keypoints.

全局描述子能够通过局部特征生成,即在局部图片(比如图像中划分出的网格)上找出关键点,然后描述其局部特征,再把这些局部特征描述子结合起来,形成全局描述子。

Badino et al. [108] used whole-image descriptors based on SURF features known as WI-SURF to perform localization and BRIEFGist [109] used BRIEF features [91] in a similar whole-image fashion.

Badino等人[108]使用WI-SURF(SURF特征)全局图像描述子来定位,BRIEF-Gist(RIEF特征) [109]也是类似的全局图像方式。

4.B.3 paragraph

A popular whole-image descriptor is Gist [74], [75], which has been used for place recognition on a number of occasions [110]–[113].

Gist全局图像描述子 [74],[75] 比较流行,已用于很多场合的场景识别[110] - [113]。

Gist uses Gabor filters at different orientations and different frequencies to extract information from the image. The results are averaged to generate a compact vector that represents the “gist” of a scene.

Gist使用Gabor滤波器,以不同的方向和频率,从图像中提取信息,将结果平均,产生一个简洁向量,表示场景的“依据”。

C. Describing Places Using Local and Global Techniques

C. 基于局部和全局技术的描述地点

4.C.1 paragraph

Local and global descriptors each have different advantages and disadvantages.

局部和全局描述子各~~~~有优缺点。

Local feature descriptors are not restricted to defining a place only in terms of a previous robot pose, but can be recombined to create new places that have not previously been explicitly observed by the robot.

局部特征不仅可以定义与先前机器人姿态位置相关的场景地点,也可以重新组合来创建未被明确观测的场景地点。

For example, Mei et al. [114] defined places via covisibility: the system finds cliques in the landmark covisibility map, and these cliques define places even when the landmarks have not simultaneously been seen in a single frame.

例如,Mei等人 [114]通过covisibility定义场景地点:系统在地标covisibility map(共视图)中定义cliques(各种局部特征点结合在一起形成一个团体),即使当前图片中没有地标,通过 cliques也能进行场景识别。

Covisibility can outperform standard imagebased techniques [78]. Lynen et al. [115] generated a 2-D space of descriptor votes where regions of high vote density represent loop closure candidates.

Covisibility技术优于一般的图像处理技术[78]。Lynen 等人[115]建立二维描述子投票空间,其中高投票密度的区域表示候选闭环。

Fig. 5. Object proposal methods such as the Edge Boxes method [123] shown here were developed for object detection but can also be used to identify potential landmarks for place recognition.

图 5似物性采样(object proposal)方法,如此处所示的Edge Boxes法[123]用于目标检测,也可用于识别潜在的地标。

The boxes in the images above showlandmarks that have been correctly matched between two viewpoints of a scene (from [122]).

上面图像中的方框表示在一个场景中两个视点(来自[122])之间,正确匹配的地标。

4.C.2 paragraph

Local features can also be combined with metric information to enable metric corrections to localization [2], [7], [76].

局部特征与度量信息结合可以对定位进行度量修正[2],[7],[76]。

Global descriptors do not have the same flexibility, and furthermore, whole-image descriptors are more susceptible to change in the robot’s pose than local descriptor methods, as whole-image descriptor comparison methods tend to assume that the camera viewpoint remains similar.

全局描述子没有此功能,此外,在机器人的姿态改变时,整体图像描述子比局部描述子更易发生变化,整体图像描述方法倾向于假定相机视点相似。

This problem can be somewhat ameliorated by the use of circular shifts as in [116] or by combining a bag-of-words approach with a Gist descriptor on segments of the image [17], [110].

循环移位[116]或词袋方法,与图像块上的Gist结合[17],[110]可以改善该问题。圆周式运动[116]的方法或者结合词袋模型Gist描述子[17],[110]可以在一定程度上改善全局描述子姿态依赖的问题。

4.C.3 paragraph

While global descriptors are more pose dependent than local feature descriptors, local feature descriptors perform poorly when lighting conditions change [117] and are comprehensively outperformed by global descriptors at performing place recognition in changing conditions [118], [119].

虽然全局描述相比于局部特征描述更具有姿态依赖性,尽管如此,但是当光照条件改变时,局部描述子的特征表现变差[117],在变化条件下执行场景识别时,全局描述子的性能更胜一筹[118] [119]。

Using global descriptors on image segments rather than whole images may provide a compromise between the two approaches, as sufficiently large image segments exhibit some of the condition invariance of whole images, and sufficiently small image segments exhibit the pose invariance of local features.

在图像块上使用全局描述子可以折中两种方法,足够大的图像块表现出整体图像的条件不变性,而足够小的图像块表现出局部特征的姿态不变性。

McManus et al. [120] used the global descriptor HOG [121] on image patches to learn condition invariant scene signatures, while S¨underhauf et al. [122] used the Edge Boxes object proposal method [123] combined with a mid-level convolutional neural network (CNN) feature [124] to identify and extract landmarks as illustrated in Fig. 5.

McManus 等人 [120]在图像块上使用HOG [121]来学习条件不变性的场景特征,而Sünderhauf等人 [122]使用Edge Boxes似物性采样方法结合中级卷积神经网络(CNN)特征[124][123]来识别和提取图5所示的地标。

D. Including Three-Dimensional Information in Place Descriptions

D.地点描述子中的三维信息

4.D.1 paragraph

The image processing techniques described above are appearance based—they “model the data directly in the visual domain (instead of making a geometric model)” (see [104]).

上述图像处理技术是基于环境外观的 ——它们“直接在视觉域中对数据建模(而不是建立几何模型)”(见[104])。

However, in metric localization systems, the appearance-based models must be extended with metric information.

然而,在度量定位系统中,外观模型必须根据度量信息进行扩展。

Monocular image data is not a natural source of geometric landmarks—“the essential geometry of the world does not “pop out” of images the same way as it does from laser data” (see [125]).

单目图像数据不能直接提供几何地标——“与激光数据不同,不能从图像中获取世界几何信息(见[125])。

While many systems use data from additional sensors such as lasers [98] or RGB-D cameras [126]–[128], geometric data can also be extracted from conventional cameras to allow metric calculation of the robot pose.

许多系统使用附加传感器数据(如激光器[98]或RGB-D相机[126] - [128]),或者从常规相机计算出的几何数据,来判断机器人姿态。

4.D.2 paragraph

Metric range information can be inferred using stereo cameras [2], [129]–[131].

度量距离可以使用立体相机采集的图片来计算[2],[129] - [131]。

Monocular cameras can also infer metric information using Structure-from-Motion algorithms [132].

单目摄像机还可以使用SFM算法[132]来推断尺度信息。

Methods include MonoSLAM [7], PTAM [133], DTAM [134], LSD-SLAM [135], and ORB-SLAM [136].

其他方法还包括MonoSLAM [7],PTAM [133],DTAM [134],LSD-SLAM [135]和ORB-SLAM [136]。

Metric information can be sparse: that is, range measurements are associated with local features such as image patches as in MonoSLAM [7], SIFT features as in [76], CenSurE features as in FrameSLAM [2], or ORB features [137] as in ORB-SLAM [136].

度量信息可能是稀疏的:也就是说,测量距离与局部特征有关,如MonoSLAM [7]中的图像块,如[76]中的SIFT特征,FrameSLAM [2]中的CenSurE特征以及在ORB-SLAM [136]中的ORB特征[137]。

In contrast, DTAM stores dense metric information about every pixel, and LSD-SLAM maintains semidense depth data on the parts of the image containing structure and information.

相反,DTAM存储所有像素的度量信息(稠密),LSD-SLAM从包含结构和信息的图像块上提取深度数据(半稠密)。

Dense metric data allow a robot to perform obstacle avoidance and metric planning as well as mapping and localization; therefore, fully autonomous vision-only navigation can be performed [16].

通过稠密度量数据,机器人可以避障、数据规划建图和定位,因此,可以自主视觉导航[16]。

4.D.3 paragraph

The introduction of novel sensors, such as RGB-D cameras, that provide dense depth information as well as image data has spurred the development of dense mapping techniques [70], [126]–[128], [138], [139].

通过新型传感器,如RGB-D相机,检测深度信息和图像数据,促进了稠密地图技术的发展[70],[126] - [128],[138],[139] 。

These sensors can also exploit 3-D object information to improve place recognition.

这些传感器还可以利用3D信息来改善位置识别。

SLAM++ [70] stores a database of 3-D object models, uses this database to perform object recognition during navigation, and uses these objects as high-level place features.

SLAM ++ [70]存储一个3D模型数据库,在导航期间,使用该数据库进行物体识别,并用识别出的物体作为地点的高级特征。

Objects have a number of advantages over low-level place features: they provide rich semantic information and can reduce memory requirements via semantic compression, that is, storing object labels rather than full object models in the map [70].

物体信息相对于低级别地点特征有以下优点:它们提供丰富的语义信息,通过语义压缩减少内存需求——在地图中存储物体标签而不是完整的物体模型[70]。